Zhenmei Shi

Ph.D. Student, Computer Science


About Me

I am currently a second-year Ph.D. student in Computer Science at the University of Wisconsin-Madison, advised by Prof. Yingyu Liang. I received my B.S. degree from the Hong Kong University of Science and Technology in 2019, with majors in Computer Science and Pure Mathematics Advanced.

I am fortunate to be returning as an intern to the Google YouTube Ads Machine Learning team in Mountain View in summer 2021. I had a wonderful experience with the Google Pixel Camera team in summer 2020. Previously, I interned in the Base Model group of Megvii (Face++), Beijing, in summer 2019, and completed two internships with the Tencent YouTu group, Shenzhen, in winter 2019 and winter 2018.

My research interests include Machine Learning Theory and Computer Vision.

Publications


On Feature Learning in Neural Networks: Emergence from Inputs and Advantage over Fixed Features

Zhenmei Shi, Junyi Wei, Yingyu Liang (under review)

We provide new insights into the loss landscape and training dynamics of neural networks, including a wedge-like structure of the low-loss solutions and the different roles that input structure plays in shallow and deep networks.


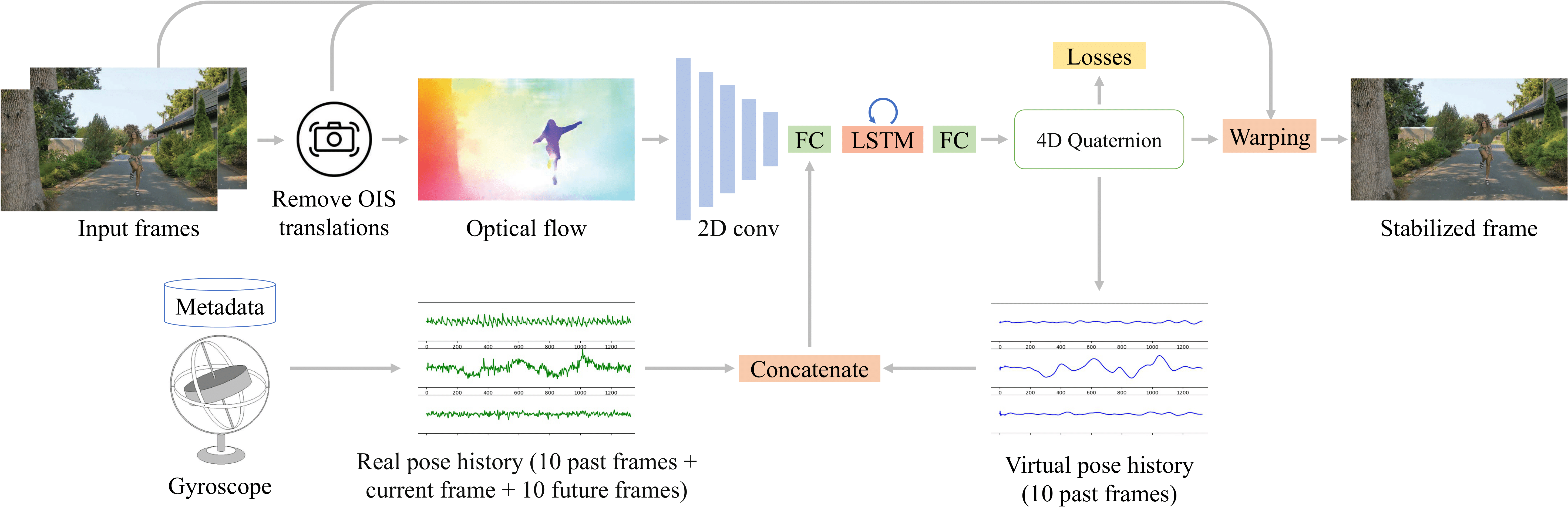

Deep Online Fused Video Stabilization

Zhenmei Shi, Fuhao Shi, Wei-Sheng Lai, Chia-Kai Liang, Yingyu Liang (under review) [ Project ] [ Paper ]

We present a deep neural network (DNN) that combines sensor data (gyroscope) with image content (optical flow) to stabilize videos through unsupervised learning.


Structured Feature Learning for End-to-End Continuous Sign Language Recognition

Zhaoyang Yang*, Zhenmei Shi*, Xiaoyong Shen, Yu-Wing Tai. arXiv, 2019 [ News ] [ Paper ]

Our SF-Net extracts features in a structured manner, gradually encoding information at the frame, gloss, and sentence levels into the feature representation.


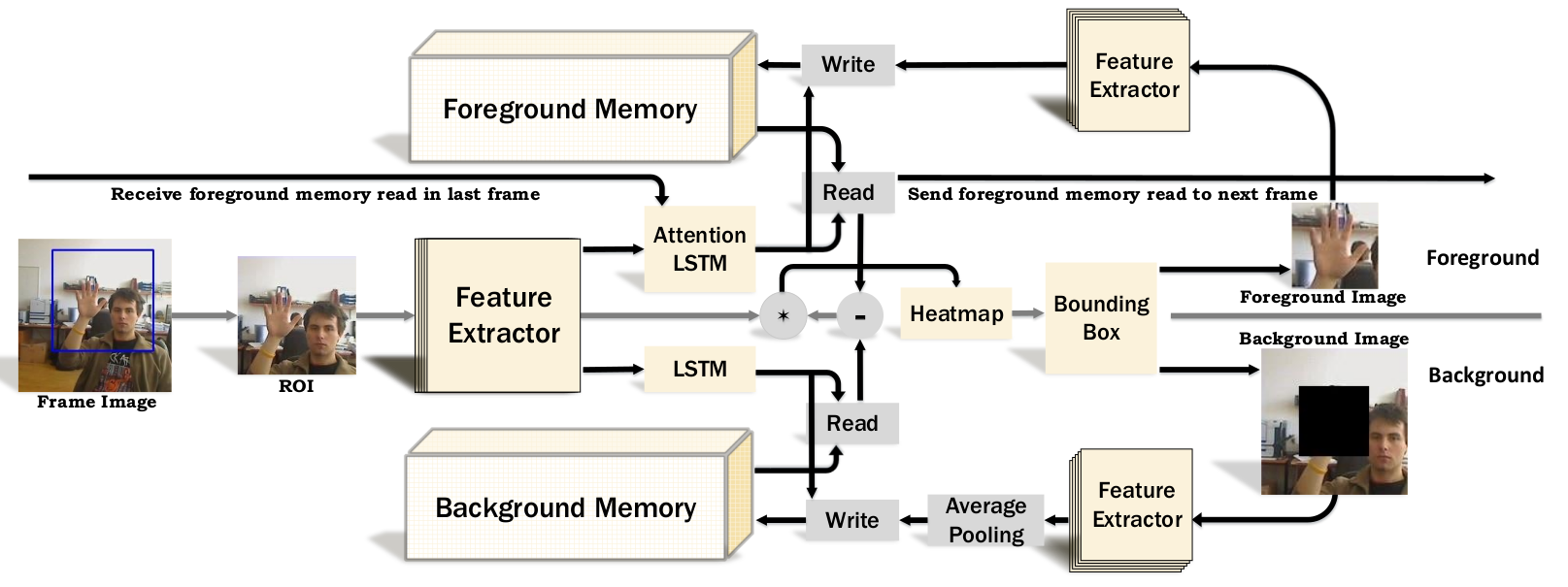

Dual Augmented Memory Network for Unsupervised Video Object Tracking

Zhenmei Shi*, Haoyang Fang*, Yu-Wing Tai, Chi Keung Tang. arXiv, 2019 [ Project ] [ Paper ]

Our Dual Augmented Memory Network is unique in that it remembers both the target and the background, and uses an attention LSTM memory to guide focus toward memorized features.

Technical Projects


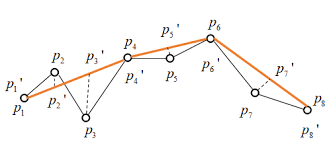

Velocity Vector Preserving Trajectory Simplification

Guanzhi Wang*, Zhenmei Shi*, Cheng Long, Ya Gao, Raymond Wong. Technical Report, 2018 [ Code ] [ Paper ]

We designed an algorithm that simplifies a trajectory by minimizing the number of retained points, subject to the constraint that the velocity-based error does not exceed a given tolerance.


Deep Colorization, 2018.

Advisors: Xin Tao and Prof. Yu-Wing Tai [ News ]


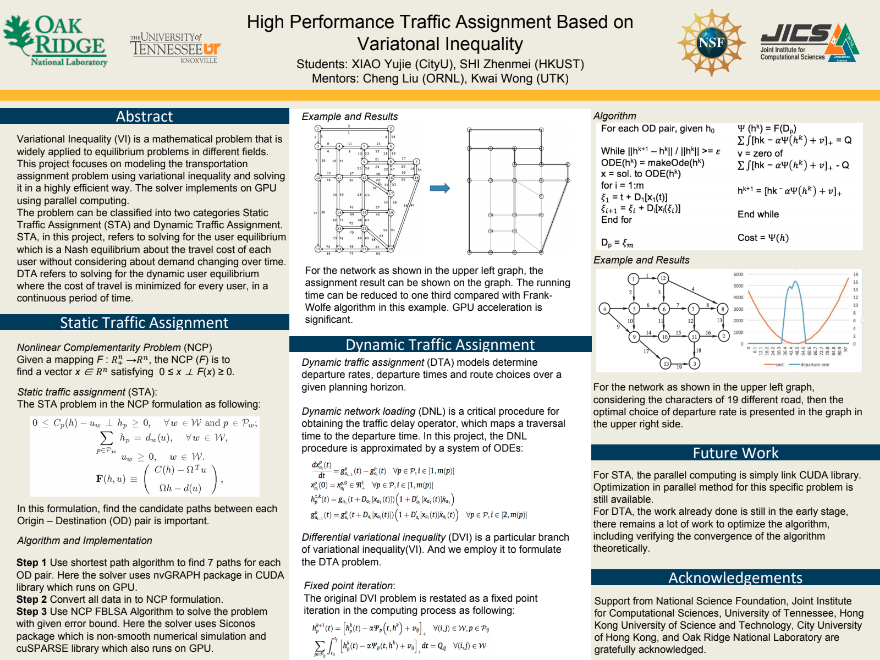

High-Performance Traffic Assignment Based on Variational Inequality, 2017.

Advisors: Dr. Cheng Liu and Prof. Kwai L. Wong [ Poster ]

Research & Work Experience


Research Assistant

University of Wisconsin-Madison, 2019 - Present | Prof. Yingyu Liang


Software Engineering Intern

Google, Mountain View, CA

Summer 2021 | Myra Nam

Summer 2020 | Fuhao Shi


Research Intern

Megvii (Face++), Beijing

Summer 2019 | Xiangyu Zhang


Research Intern

Tencent YouTu, Shenzhen

Winter 2019 | Zhaoyang Yang and Prof. Yu-Wing Tai

Winter 2018 | Xin Tao and Prof. Yu-Wing Tai


Research Assistant

Hong Kong University of Science and Technology

2018 - 2019 | Prof. Chi Keung Tang

2017 - 2018 | Prof. Raymond Wong

Summer 2016 | Prof. Ji-Shan Hu


Research Intern

Oak Ridge National Laboratory, USA

Summer 2017 | Dr. Cheng Liu and Prof. Kwai L. Wong

Academic Services


Conference Reviewer at ICCV 2021

Conference Reviewer at CVPR 2021

Conference Reviewer at ECCV 2020

Teaching

Teaching Assistant for CS220 (Data Programming I) at UW-Madison (Spring 2020)

Teaching Assistant for CS301 (Intro to Data Programming) at UW-Madison (Fall 2019)